Chapter 15: Responsible AI Prompting

Ethical Principles, Bias Awareness, and Social Responsibility

Learning Objectives

After completing this chapter, you will be able to:

- Apply ethical principles to AI prompting decisions

- Identify and mitigate bias in prompts and outputs

- Ensure transparency and explainability

- Consider social impact of AI interactions

- Implement responsible AI practices in your work

The Responsibility Framework

Why Responsible AI Matters

AI systems amplify human decisions at scale. Prompts that seem harmless can produce harmful outcomes when applied broadly.

| Small Decision | Big Impact |

|---|---|

| One biased prompt | Thousands of biased outputs |

| One careless phrase | Scaled misinformation |

| One oversight | Systematic discrimination |

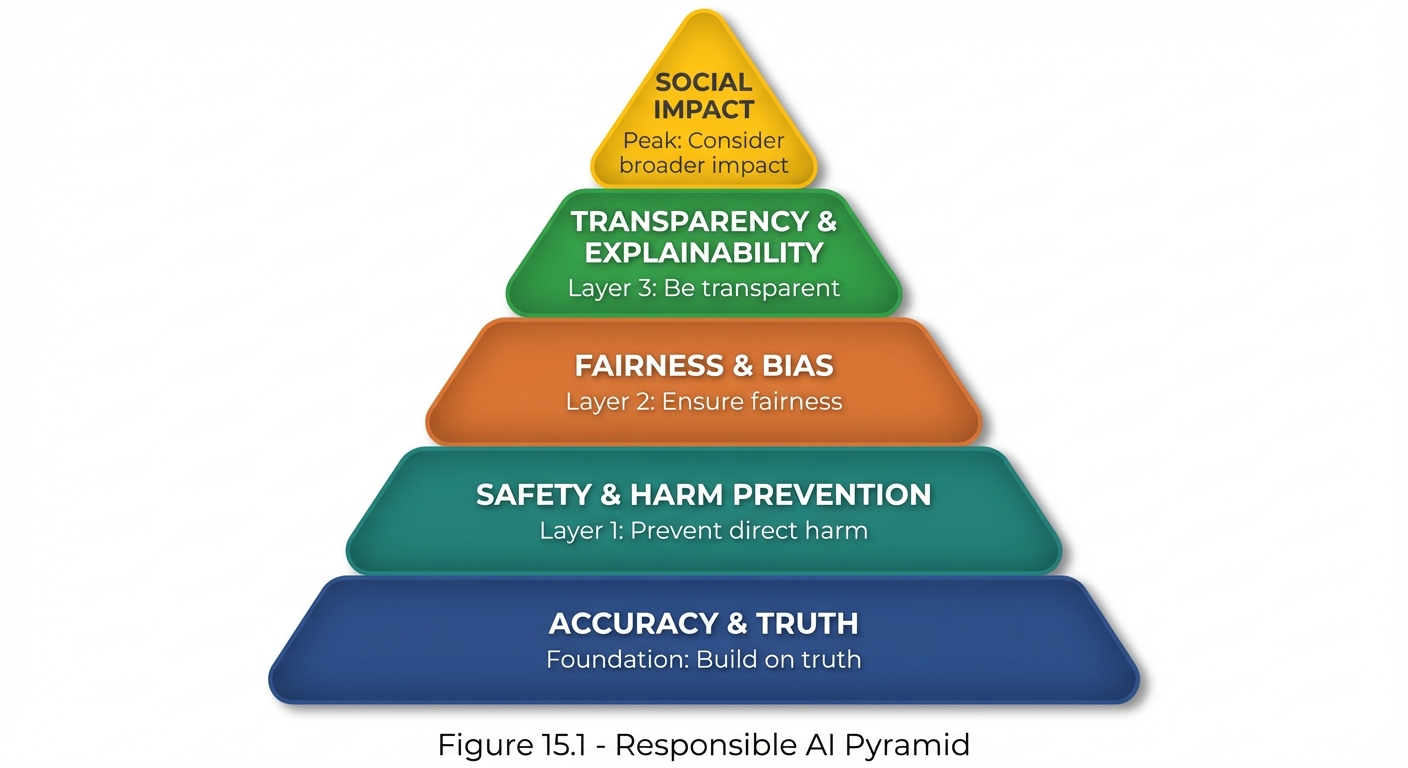

The Responsible AI Pyramid

Figure 15.1: The Responsible AI Pyramid—foundational accuracy supports safety, fairness, transparency, and ultimately positive social impact.

Figure 15.1: The Responsible AI Pyramid—foundational accuracy supports safety, fairness, transparency, and ultimately positive social impact.

Core Ethical Principles

The 6 Principles of Responsible AI Prompting

| # | Principle | Description |

|---|---|---|

| 1 | Accuracy | Prompts should seek truthful, correct information |

| 2 | Safety | Prompts should not enable harm |

| 3 | Fairness | Prompts should not discriminate or bias |

| 4 | Transparency | AI nature and limitations should be clear |

| 5 | Privacy | Personal data should be protected |

| 6 | Accountability | Humans remain responsible for AI use |

Principle Application Matrix

| Principle | In Prompt Design | In Output Review | In Deployment |

|---|---|---|---|

| Accuracy | Verify claims | Fact-check outputs | Monitor accuracy |

| Safety | Avoid harm vectors | Review for harm | Block harmful use |

| Fairness | Check for bias | Audit for discrimination | Test across groups |

| Transparency | Be honest about AI | Don’t fake human | Disclose AI use |

| Privacy | Minimize data | Redact sensitive | Secure handling |

| Accountability | Document decisions | Track outcomes | Own results |

Bias Awareness and Mitigation

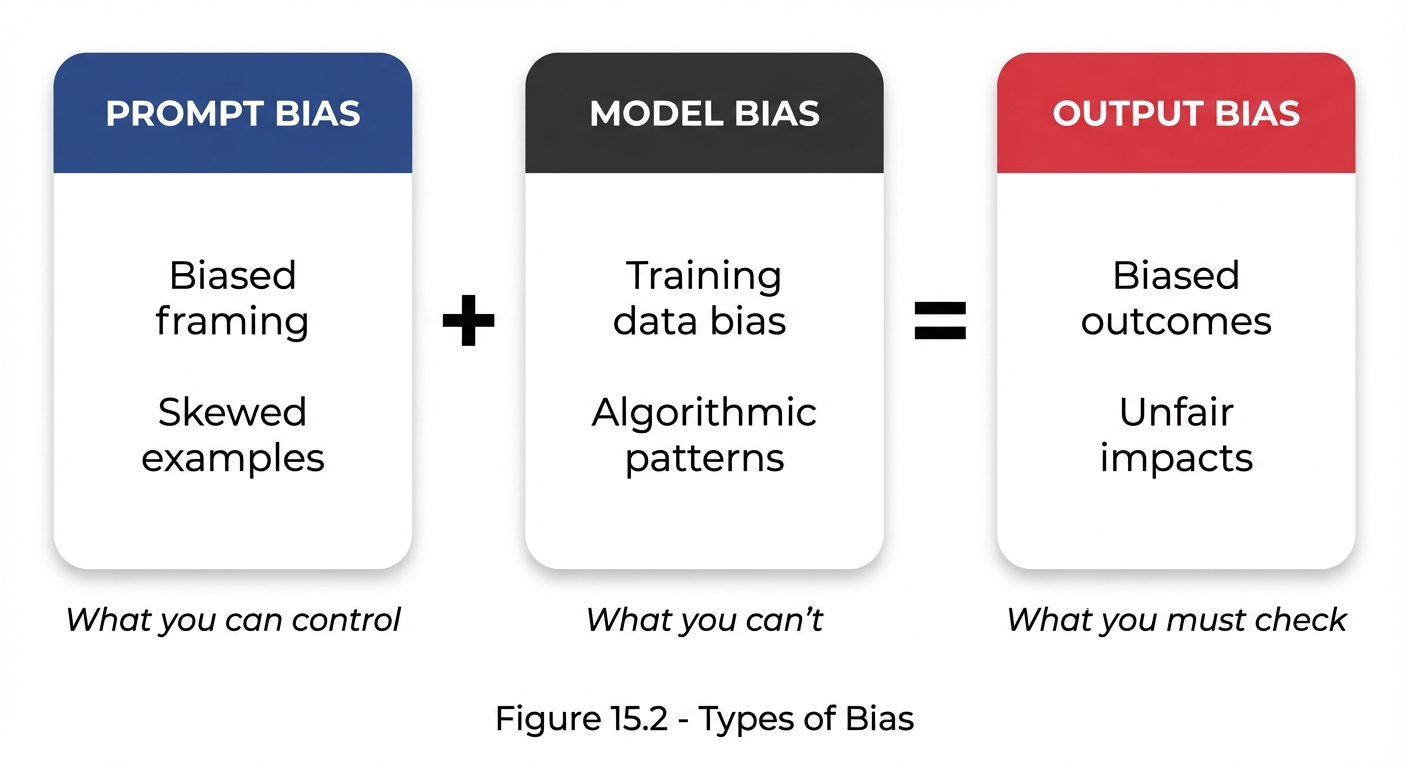

Types of Bias in AI Prompting

Figure 15.2: Bias flows from prompts and models to outputs—control what you can, check what you must.

Figure 15.2: Bias flows from prompts and models to outputs—control what you can, check what you must.

Common Bias Patterns

| Bias Type | Description | Example |

|---|---|---|

| Selection | Non-representative examples | Only positive reviews as examples |

| Confirmation | Seeking expected answers | Leading questions |

| Stereotyping | Assuming group characteristics | Gender in job roles |

| Anchoring | Over-relying on first info | Priming with numbers |

| Framing | How question shapes answer | Positive vs. negative framing |

Bias Identification Checklist

- Do my examples represent diverse perspectives?

- Am I using neutral language (not leading)?

- Would different groups receive equitable treatment?

- Are my assumptions about users warranted?

- Have I tested with diverse inputs?

- Am I avoiding stereotypes in roles/personas?

- Is the framing balanced (not one-sided)?

Bias Mitigation Strategies

1. Diverse Examples

❌ BIASED: Only examples from one demographic

✅ BALANCED: Examples representing diverse perspectives

2. Neutral Framing

❌ BIASED: "What are the benefits of X?"

✅ BALANCED: "What are the advantages and disadvantages of X?"

3. Explicit Fairness Instructions

"Ensure your response:

- Does not assume gender, race, or other characteristics

- Considers diverse perspectives

- Avoids stereotypes

- Would be appropriate for any user"

4. Audit Outputs

Test prompts with different names, scenarios, and framings. Compare outputs for consistency and fairness.

Transparency and Explainability

Why Transparency Matters

Users should know:

- They’re interacting with AI

- AI has limitations

- How outputs were generated

- Confidence levels in responses

Transparency in Practice

Disclosure of AI

Prompt: "Begin your response by noting that you are an AI assistant."

Or include in system prompt:

"Always be transparent that you are an AI when directly asked

or when it's relevant to the conversation."

Acknowledging Limitations

Prompt: "If you're uncertain about any facts, say so clearly.

Indicate which parts of your response are more vs. less confident."

Explaining Reasoning

Prompt: "Show your reasoning process so the user can evaluate

the logic behind your conclusions."

Explainability Requirements

| Situation | Explainability Need | Implementation |

|---|---|---|

| High-stakes decision | Must explain | Require reasoning chain |

| General information | Should explain | Offer to elaborate |

| Creative content | Optional | Explain if asked |

Harm Prevention

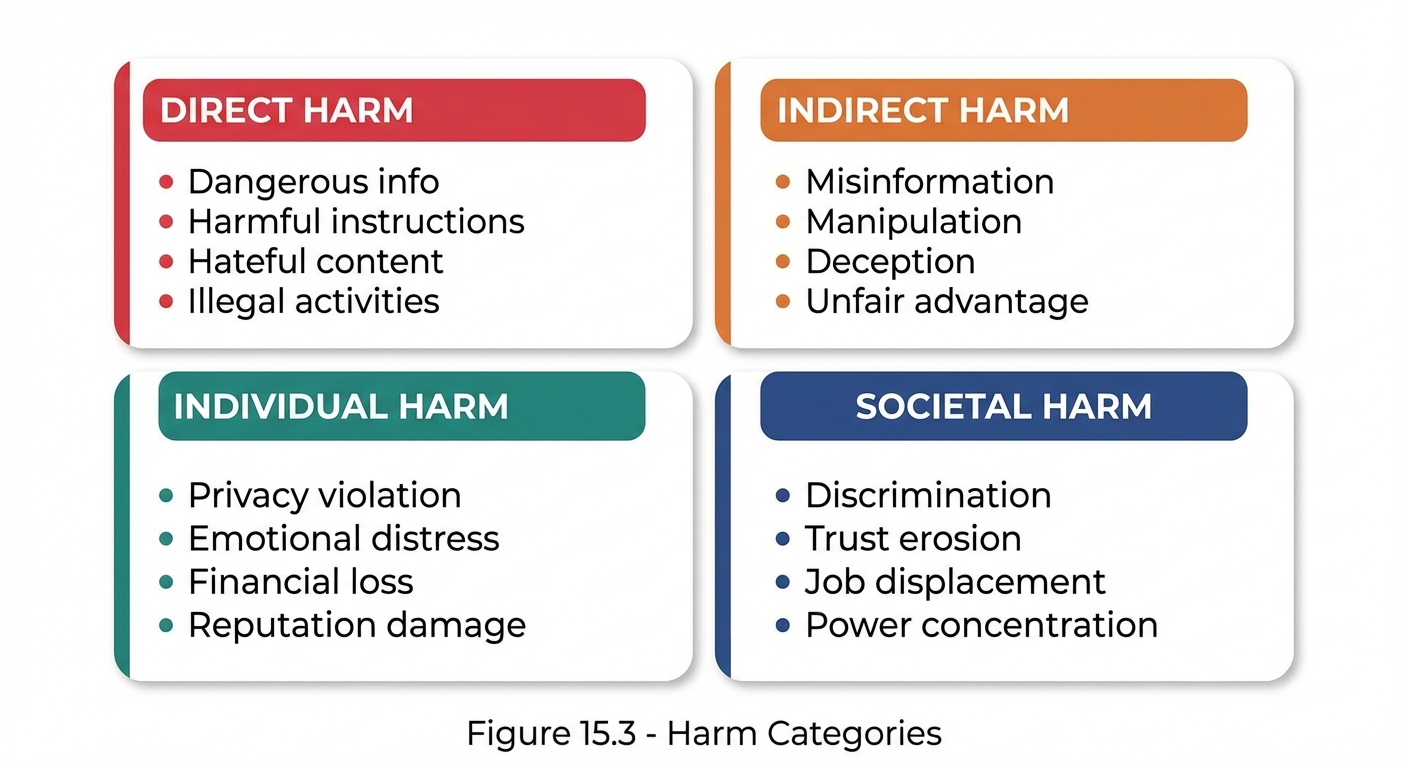

Types of Potential Harm

Figure 15.3: Four categories of potential AI harm—direct, indirect, individual, and societal—that responsible prompting must address.

Figure 15.3: Four categories of potential AI harm—direct, indirect, individual, and societal—that responsible prompting must address.

Harm Prevention Guidelines

1. Don’t Enable Harmful Actions

System Prompt:

"Do not provide instructions for:

- Creating weapons or dangerous substances

- Hacking or unauthorized access

- Fraud, scams, or deception

- Harassment or stalking

- Self-harm or harm to others"

2. Don’t Spread Misinformation

System Prompt:

"When discussing factual matters:

- Distinguish fact from opinion

- Acknowledge uncertainty

- Don't make up information

- Correct misperceptions when appropriate"

3. Don’t Manipulate

System Prompt:

"Maintain user autonomy:

- Present balanced information

- Don't use psychological manipulation

- Support informed decision-making

- Respect user's right to disagree"

Social Impact Considerations

Impact Assessment Questions

Before deploying a prompt at scale, consider:

| Category | Questions |

|---|---|

| Direct | Who will use this? What decisions will it influence? What could go wrong? |

| Indirect | Could this be misused? What groups might be affected? What are unintended consequences? |

| Scale | What happens if used 1M times? Does impact compound? Are errors correctable? |

Use Case Evaluation

| Use Case | Risk Level | Required Safeguards |

|---|---|---|

| Entertainment | Low | Basic safety |

| Education | Medium | Accuracy checks |

| Health advice | High | Disclaimers, referrals |

| Financial decisions | High | Caveats, verification |

| Hiring/screening | Critical | Bias audit, human review |

The 6 Control Objectives

Overview

The 6 Control Objectives provide a governance framework for responsible AI prompting:

| # | Objective | Focus |

|---|---|---|

| 1 | Accuracy Control | Ensure factual correctness |

| 2 | Consistency Control | Maintain reproducible outputs |

| 3 | Safety Control | Prevent harmful content |

| 4 | Privacy Control | Protect sensitive information |

| 5 | Compliance Control | Meet regulatory requirements |

| 6 | Quality Control | Maintain output standards |

Control Implementation Example: Safety

| Element | Details |

|---|---|

| Objective | Prevent harmful, dangerous, or inappropriate content |

| Measures | Content filtering, output review, user feedback, incident response |

| Metrics | Harmful output rate <0.01%, complaints <1 per 10K, response <24hrs |

| Testing | Weekly adversarial, monthly audit, quarterly red team |

Practical Implementation

Pre-Deployment Checklist

| Principle | Verify |

|---|---|

| Accuracy | Facts verified, uncertainty acknowledged, sources cited |

| Safety | Tested for harmful outputs, safeguards in place, edge cases handled |

| Fairness | Tested for bias, diverse perspectives, no stereotypes |

| Transparency | AI nature clear, limitations acknowledged, reasoning explainable |

| Privacy | Minimal data collection, sensitive info protected, handling compliant |

| Accountability | Human oversight defined, feedback mechanism exists, responsibility clear |

Escalation Triggers

Stop and review if:

- Output could cause physical harm

- Content discriminates against protected groups

- Personal information is exposed

- Legal or regulatory concerns arise

- User reports concern or harm

- Unusual patterns detected

Key Takeaways

- Ethical principles provide foundation for responsible AI use

- Bias exists in prompts, models, and outputs—active mitigation required

- Transparency builds trust and enables informed decisions

- Harm prevention is a shared responsibility

- Social impact scales with usage—consider carefully

- Control objectives operationalize responsible AI

Summary

Responsible AI prompting isn’t an afterthought—it’s a foundational requirement. By applying ethical principles, actively checking for bias, maintaining transparency, preventing harm, and considering social impact, you can help ensure that AI serves users well without causing unintended negative consequences. The 6 Control Objectives provide a practical framework for implementing these principles systematically.

Review Questions

- What are the 6 principles of responsible AI prompting?

- How can you mitigate bias in prompts?

- Why is transparency important in AI interactions?

- What are the four categories of potential harm?

- What triggers should cause you to pause and review?

Practical Exercise

Exercise 15.1: Bias Audit

Take a prompt you use regularly and audit it for bias:

- Check for stereotypes

- Test with diverse inputs

- Review for leading language

- Document any issues found

- Create a revised, less biased version

Exercise 15.2: Impact Assessment

Conduct a social impact assessment for a prompt that:

- Provides job interview practice

- Will be used by thousands of users

- Covers various industries and roles

Identify potential issues and propose mitigations.