Chapter 3: The Prompting Pillars Framework

The 7 Pillars That Form the Foundation of Effective AI Communication

Learning Objectives

After completing this chapter, you will be able to:

- Name and define the 7 Prompting Pillars

- Explain how the pillars work together

- Apply each pillar to improve your prompts

- Assess prompts using the pillar framework

- Identify which pillars need strengthening in any given prompt

Introduction to the 7 Prompting Pillars

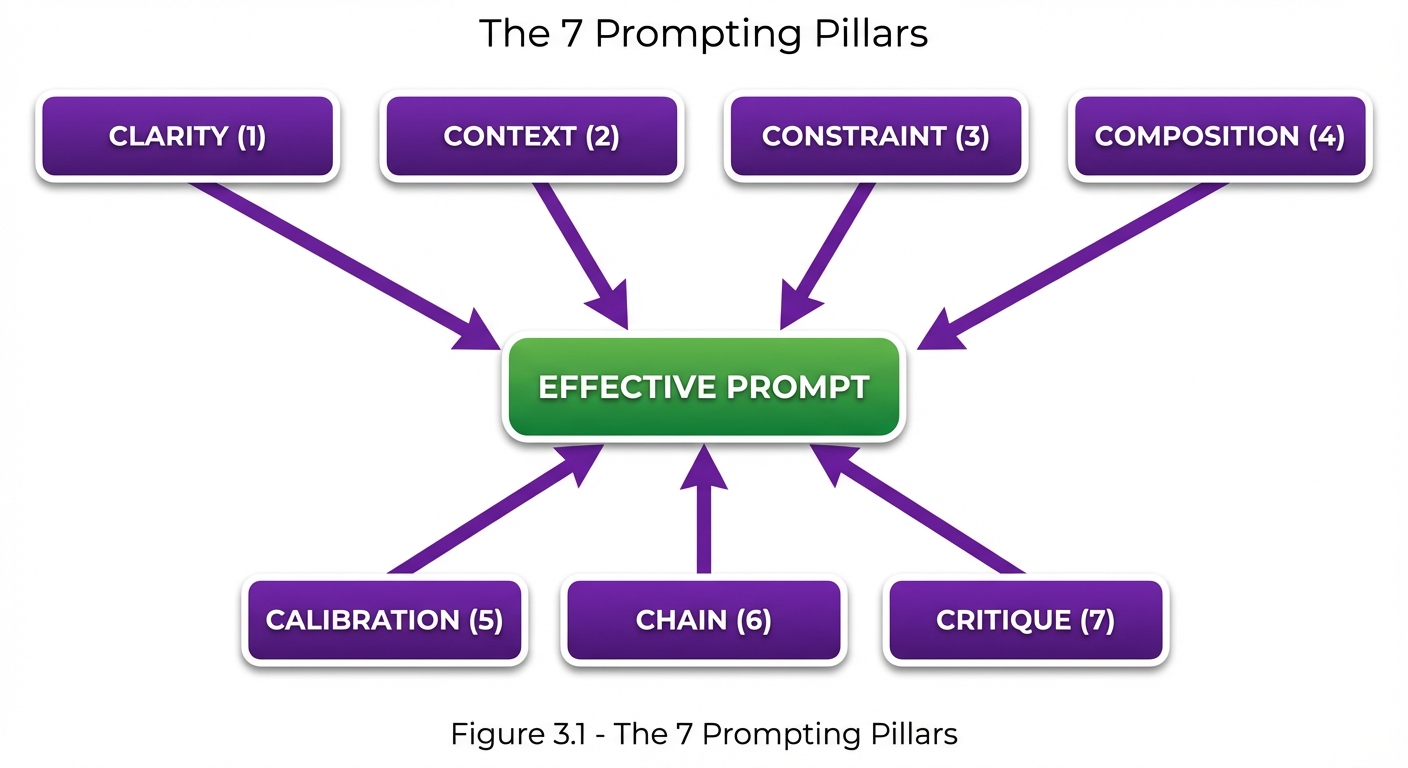

The 7 Prompting Pillars framework provides a comprehensive mental model for constructing effective prompts. Each pillar represents a critical dimension of prompt design. Together, they ensure your prompts are complete, clear, and capable of eliciting the results you need.

The Pillars Overview

Figure 3.1: The 7 Prompting Pillars - A comprehensive framework where seven interconnected pillars work together to create effective prompts. Pillars 1-4 provide structural elements while Pillars 5-7 add refinement and quality assurance.

Figure 3.1: The 7 Prompting Pillars - A comprehensive framework where seven interconnected pillars work together to create effective prompts. Pillars 1-4 provide structural elements while Pillars 5-7 add refinement and quality assurance.

Quick Reference

| # | Pillar | Core Question | Focus |

|---|---|---|---|

| 1 | Clarity | Is the intent unambiguous? | Communication |

| 2 | Context | Is relevant background provided? | Information |

| 3 | Constraint | Are boundaries defined? | Limitations |

| 4 | Composition | Is structure specified? | Format |

| 5 | Calibration | Is tone/style appropriate? | Voice |

| 6 | Chain | Is reasoning enabled? | Logic |

| 7 | Critique | Is self-evaluation built in? | Quality |

Pillar 1: Clarity

Definition

Clarity ensures that your prompt communicates intent without ambiguity. A clear prompt leaves no room for misinterpretation.

Why It Matters

Ambiguous prompts lead to:

- Responses that miss the point

- Multiple interpretations of the same request

- Wasted iterations clarifying intent

Clarity Principles

| Principle | Description | Example |

|---|---|---|

| Specificity | State exactly what you want | “List 5 items” vs. “List some items” |

| Precision | Use exact terms | “Python 3.11” vs. “Python” |

| Directness | Avoid hedging | “Write code” vs. “Could you maybe write code?” |

| Completeness | Include all requirements | “Sort ascending by date” vs. “Sort the list” |

Clarity Assessment

❌ UNCLEAR: "Help me with my code"

- What code? What kind of help?

✅ CLEAR: "Debug the Python function below that should calculate

compound interest but returns incorrect values for

periods greater than 12 months."

- Specific task, language, problem described

Clarity Checklist

- Task is explicitly stated

- No ambiguous pronouns (it, this, that) without clear referents

- Technical terms are precise

- Quantities are specified where relevant

- Success criteria are defined

Pillar 2: Context

Definition

Context provides the background information needed for the AI to understand and appropriately respond to your request.

Why It Matters

Without sufficient context:

- Responses may be generic rather than specific

- Critical assumptions may be wrong

- Output may not fit your actual situation

Types of Context

| Type | Description | Example |

|---|---|---|

| Domain | Field or industry | “In healthcare compliance…” |

| Task | What you’re trying to accomplish | “For a sales presentation…” |

| User | Who you are or represent | “As a junior developer…” |

| System | Technical environment | “Using React 18 with TypeScript…” |

| Historical | Previous relevant information | “Following up on our earlier discussion…” |

Context Loading Pattern

**Domain Context:**

You are helping with financial analysis for a mid-size retail company.

**Task Context:**

We're preparing for Q4 board presentation.

**Data Context:**

Here are the quarterly sales figures: [data]

**Constraint Context:**

The board prefers visual summaries and dislikes jargon.

**Request:**

Create an executive summary of Q4 performance.

Context Calibration

Too little context:

"Write a function to process the data."

Right amount of context:

"Write a Python function that processes customer transaction data

(CSV format with columns: date, customer_id, amount, category)

and returns monthly spending totals by category."

Too much context:

[Unnecessary company history, irrelevant technical details,

personal anecdotes that don't affect the task...]

Pillar 3: Constraint

Definition

Constraint defines the boundaries, limitations, and requirements that the response must adhere to.

Why It Matters

Without constraints:

- Responses may be too long or too short

- Content may include unwanted elements

- Output may violate important requirements

Types of Constraints

| Type | Examples |

|---|---|

| Length | “Maximum 200 words”, “Exactly 5 bullet points” |

| Format | “JSON only”, “No markdown”, “Table format” |

| Content | “No technical jargon”, “Include citations” |

| Scope | “Only discuss X”, “Exclude Y” |

| Style | “Formal language”, “Simple vocabulary” |

| Technical | “Python 3.x only”, “No external dependencies” |

Constraint Specification Pattern

**Constraints:**

- Length: 150-200 words

- Format: Bullet points

- Tone: Professional but approachable

- Must include: Action items

- Must exclude: Technical implementation details

- Audience: C-level executives

Positive vs. Negative Constraints

| Type | Example | Use When |

|---|---|---|

| Positive | “Include a summary” | Requiring specific elements |

| Negative | “Do not include code” | Excluding specific elements |

| Bounded | “Between 100-150 words” | Setting ranges |

Pillar 4: Composition

Definition

Composition specifies the structure, format, and organization of the desired output.

Why It Matters

Clear composition specifications:

- Make outputs immediately usable

- Ensure consistency across responses

- Enable automated processing

Composition Elements

| Element | Description | Example |

|---|---|---|

| Structure | Overall organization | “Three sections: Intro, Body, Conclusion” |

| Format | Output type | “JSON”, “Markdown table”, “Numbered list” |

| Sections | Required parts | “Include: Summary, Details, Next Steps” |

| Hierarchy | Nesting and levels | “H2 for main points, H3 for sub-points” |

Composition Templates

List Format:

Provide your response as a numbered list:

1. [First item]

2. [Second item]

...

Table Format:

Format as a markdown table with columns:

| Item | Description | Priority |

JSON Format:

Return valid JSON with this structure:

{

"summary": "string",

"items": ["array", "of", "strings"],

"confidence": number

}

Structured Sections:

Organize your response with these sections:

## Overview

[High-level summary]

## Details

[In-depth analysis]

## Recommendations

[Actionable next steps]

Pillar 5: Calibration

Definition

Calibration sets the tone, style, persona, and voice for the response.

Why It Matters

Proper calibration ensures:

- Appropriate language for the audience

- Consistent voice across interactions

- Responses that match the situation

Calibration Dimensions

| Dimension | Spectrum |

|---|---|

| Formality | Casual ←→ Formal |

| Complexity | Simple ←→ Technical |

| Emotion | Neutral ←→ Empathetic |

| Confidence | Tentative ←→ Assertive |

| Detail | High-level ←→ Granular |

Calibration Techniques

Role Assignment:

You are a senior software architect with 20 years of experience.

Respond as this expert would.

Audience Specification:

Explain this for a non-technical audience who has never

used a computer terminal.

Tone Direction:

Use an encouraging, supportive tone suitable for a

nervous first-time presenter.

Style Matching:

Match the writing style of the following example:

[Example text]

Calibration Examples

| Scenario | Calibration |

|---|---|

| Executive briefing | Formal, high-level, confident |

| User documentation | Friendly, step-by-step, patient |

| Technical review | Precise, detailed, objective |

| Customer support | Empathetic, solution-focused, clear |

Pillar 6: Chain

Definition

Chain enables logical sequencing, step-by-step reasoning, and structured thinking processes.

Why It Matters

Chain-of-thought approaches:

- Improve accuracy on complex problems

- Make reasoning transparent

- Enable verification of logic

Chain Techniques

| Technique | Description | Trigger Phrase |

|---|---|---|

| Step-by-step | Sequential reasoning | “Think through this step by step” |

| Show work | Visible reasoning | “Show your reasoning” |

| Decomposition | Break into parts | “First analyze X, then Y, then Z” |

| Verification | Self-check | “Verify your answer” |

Chain Patterns

Basic Chain:

Solve this problem step by step, showing your work:

[Problem]

Structured Chain:

Approach this in three phases:

1. UNDERSTAND: Restate the problem in your own words

2. PLAN: Outline your approach

3. EXECUTE: Implement the solution

Verification Chain:

1. Solve the problem

2. Check your solution against the requirements

3. Identify any potential issues

4. Provide your final answer

When to Use Chain

| Task Type | Chain Benefit |

|---|---|

| Math problems | Shows calculation steps |

| Logic puzzles | Reveals reasoning process |

| Complex analysis | Structures thinking |

| Code debugging | Traces through logic |

| Decision making | Documents factors considered |

Pillar 7: Critique

Definition

Critique builds in self-evaluation, quality checks, and iterative refinement.

Why It Matters

Built-in critique:

- Catches errors before delivery

- Improves output quality

- Enables self-correction

Critique Techniques

| Technique | Description | Example |

|---|---|---|

| Self-review | Check own work | “Review your response for errors” |

| Alternative check | Consider other options | “What might you have missed?” |

| Quality rating | Self-assess | “Rate confidence 1-10” |

| Assumption check | Surface assumptions | “What assumptions are you making?” |

Critique Patterns

Post-Response Review:

After providing your answer:

1. Review for factual accuracy

2. Check for completeness

3. Note any uncertainties

Confidence Indication:

End your response with:

- Confidence level (High/Medium/Low)

- Any caveats or limitations

- Suggested verification steps

Alternative Consideration:

After your recommendation:

1. State the main recommendation

2. Provide one alternative approach

3. Explain trade-offs between them

Pillar Integration

How the Pillars Work Together

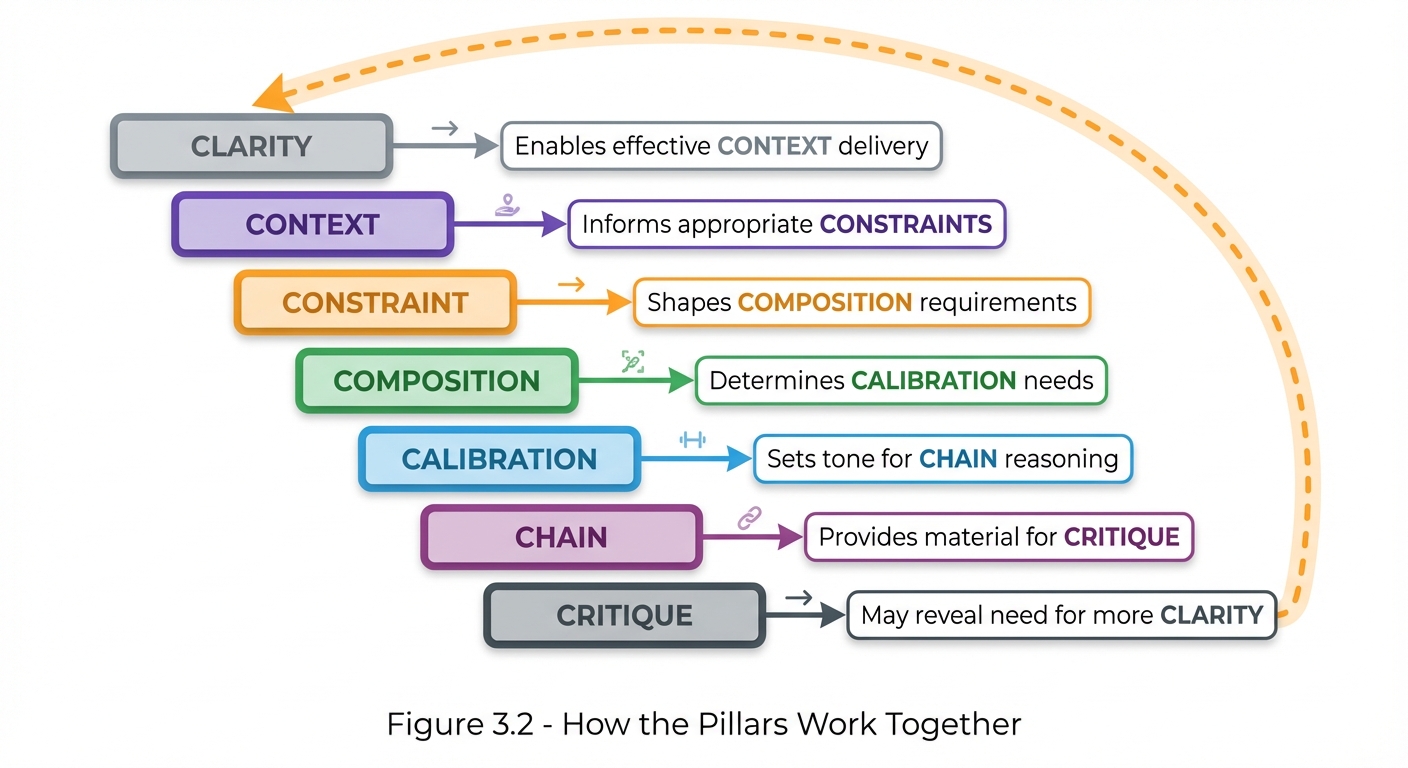

The pillars are not isolated—they interact and reinforce each other:

Figure 3.2: Pillar Interactions - Each pillar builds upon and enables the next in a continuous improvement cycle. Clear intent enables context delivery, which informs constraints, and so on through critique, which may reveal the need for additional clarity.

Figure 3.2: Pillar Interactions - Each pillar builds upon and enables the next in a continuous improvement cycle. Clear intent enables context delivery, which informs constraints, and so on through critique, which may reveal the need for additional clarity.

Complete Example

Here’s a prompt that integrates all seven pillars:

**CONTEXT (Pillar 2):**

You are a senior data scientist at a fintech company. We're building

a fraud detection system for credit card transactions.

**CLARITY (Pillar 1):**

Design a feature engineering pipeline that extracts behavioral

patterns from transaction data to identify anomalies.

**CONSTRAINTS (Pillar 3):**

- Must process 10,000 transactions per second

- Cannot use customer PII directly

- Must be explainable for regulatory compliance

- Implementation in Python

**COMPOSITION (Pillar 4):**

Structure your response as:

1. Feature Categories (table format)

2. Implementation Approach (numbered steps)

3. Example Code (Python)

4. Validation Strategy

**CALIBRATION (Pillar 5):**

Write for a technical audience familiar with ML but new to fraud detection.

Use precise terminology but explain domain-specific concepts.

**CHAIN (Pillar 6):**

Think through this systematically:

- First, identify what transaction patterns indicate fraud

- Then, determine how to encode these as features

- Finally, consider computational efficiency

**CRITIQUE (Pillar 7):**

After your design, evaluate:

- Potential blind spots in the feature set

- Scalability concerns

- Regulatory compliance risks

Pillar Assessment Tool

Rate Your Prompts

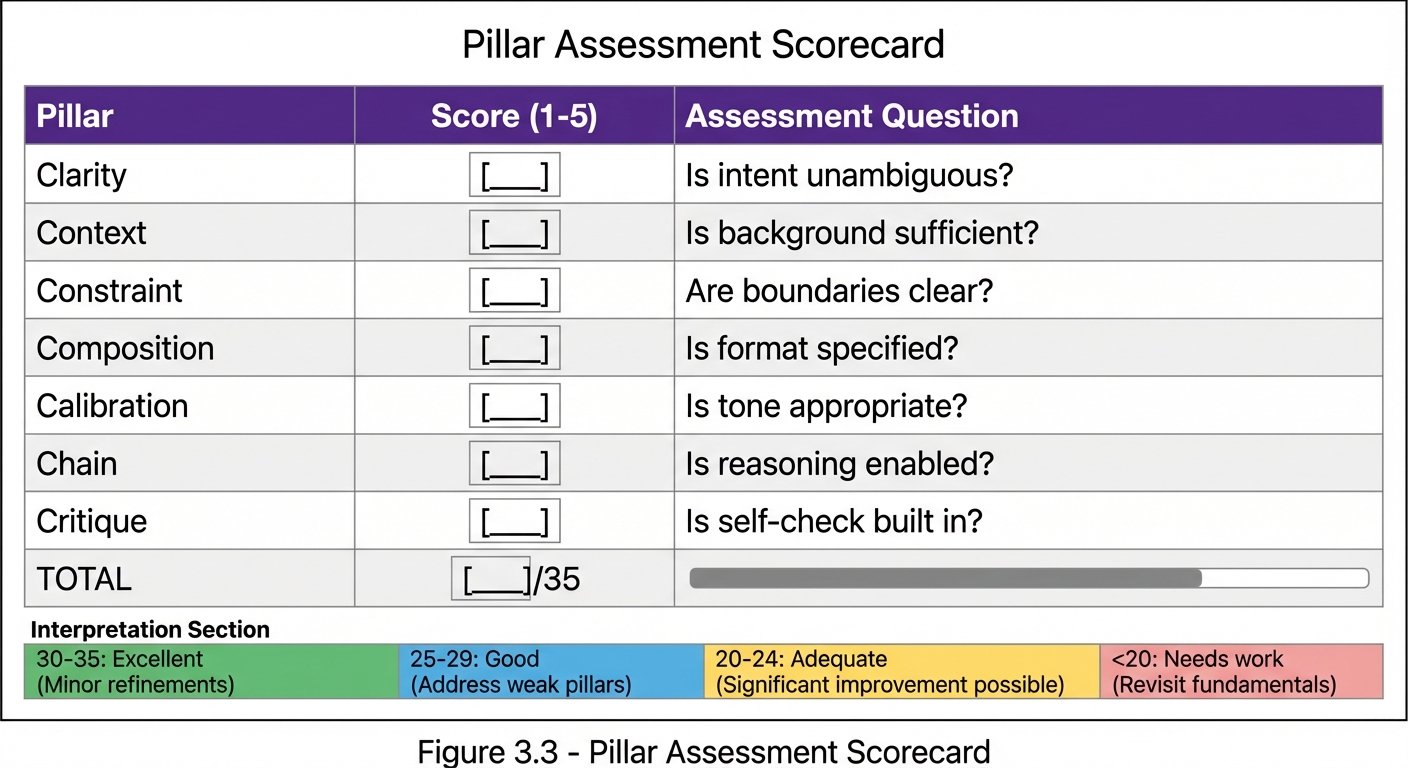

For any prompt, rate each pillar 1-5:

| Pillar | Score (1-5) | Notes |

|---|---|---|

| Clarity | ___ | Is intent unambiguous? |

| Context | ___ | Is background sufficient? |

| Constraint | ___ | Are boundaries clear? |

| Composition | ___ | Is format specified? |

| Calibration | ___ | Is tone appropriate? |

| Chain | ___ | Is reasoning enabled? |

| Critique | ___ | Is self-check built in? |

| Total | ___/35 |

Figure 3.3: Pillar Assessment Scorecard - Use this tool to evaluate any prompt against the 7 Pillars. Rate each pillar 1-5, calculate your total, and use the interpretation guide to identify areas for improvement.

Figure 3.3: Pillar Assessment Scorecard - Use this tool to evaluate any prompt against the 7 Pillars. Rate each pillar 1-5, calculate your total, and use the interpretation guide to identify areas for improvement.

Interpretation

| Score | Level | Action |

|---|---|---|

| 30-35 | Excellent | Minor refinements |

| 25-29 | Good | Address weak pillars |

| 20-24 | Adequate | Significant improvement possible |

| <20 | Needs work | Revisit fundamentals |

Key Takeaways

- The 7 Prompting Pillars provide a comprehensive framework for prompt design

- Clarity ensures unambiguous communication

- Context provides necessary background

- Constraint defines boundaries

- Composition specifies structure

- Calibration sets tone and style

- Chain enables reasoning

- Critique builds in quality checks

- Pillars work together—strengthen all for best results

Summary

The 7 Prompting Pillars framework transforms prompt creation from an ad-hoc activity into a systematic process. By considering each pillar—Clarity, Context, Constraint, Composition, Calibration, Chain, and Critique—you ensure your prompts are comprehensive, effective, and capable of eliciting high-quality responses. Use this framework as a checklist for every important prompt you craft.

Review Questions

- Name all 7 Prompting Pillars and their core focus areas.

- What are three types of context you might include in a prompt?

- How do positive and negative constraints differ?

- When is the Chain pillar most valuable?

- What techniques implement the Critique pillar?

Practical Exercise

Exercise 3.1: Pillar Analysis

Take a recent prompt you’ve used and analyze it against each pillar. Identify which pillars are strong and which need improvement. Then rewrite the prompt to address the weak areas.

Exercise 3.2: Pillar Building

Start with this basic prompt and add elements for each pillar:

Basic: “Write a blog post about AI.”

Enhanced with all 7 pillars: [Your improved version]